The AI Experimentation Freeze: Why Risk Aversion Is Quietly Killing Your Innovation Pipeline

May 7, 2026

The Experiment Nobody Approved

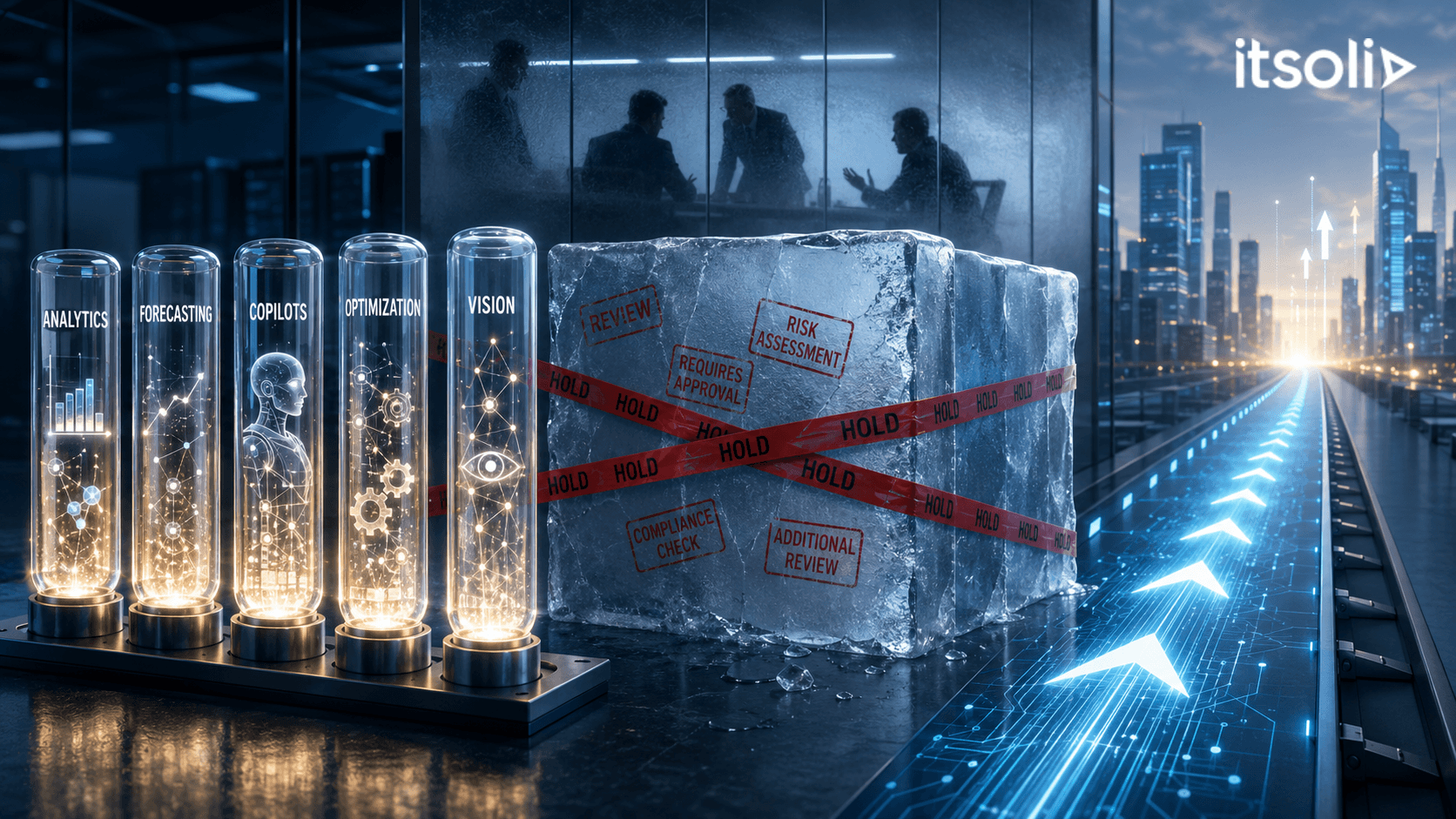

Your data science team has ten AI use case proposals ready to develop. Each has a clear business hypothesis, a defined dataset, and a measurable success metric.

In the last six months, zero have started.

Each proposal has cleared the data science team's technical review. Each has a business sponsor. Each has an identified ROI. And each has been stalled at some point in the approval chain — risk review, legal review, IT architecture review, data governance committee, AI ethics board, procurement.

The average time from proposal submission to project approval: fourteen weeks. The average number of review committees involved: five.

Your competitors started seven new AI experiments this quarter.

Here is the uncomfortable truth: The organizations that win with AI are the ones that iterate fastest. Not the ones with the most rigorous approval processes. A 2024 Bain study found that companies in the top quartile of AI value creation ran 4.3 times more AI experiments per year than companies in the bottom quartile, not because they were less disciplined, but because they had built governance that enabled speed rather than governance that impeded it.

Slow experimentation is not safe experimentation. It is expensive inaction.

Why the Freeze Happens

The Risk Asymmetry Problem. The costs of a failed AI experiment are visible and attributable. The costs of not experimenting are invisible and diffuse. Risk committees are structurally designed to prevent visible failures. They are not designed to measure the cost of invisible inaction. This asymmetry produces systematic over-restriction of AI experimentation.

The Universal Governance Problem. Enterprise governance frameworks are often designed for production AI — consequential, customer-facing, regulated systems. When the same framework is applied to internal experiments and proofs of concept, the compliance burden is disproportionate. Requiring an AI ethics board review for an internal analytics tool experiment is governance theater.

The Precedent Paralysis Problem. Early AI incidents, such as a chatbot that produced inappropriate content or a model that exhibited demographic bias, create institutional memory that amplifies risk perception beyond statistical base rates. One visible failure can freeze dozens of unrelated experiments, many of which would have succeeded.

The Distributed Approval Problem. AI experiments that require sign-off from five separate committees are operating under a governance model designed for capital expenditure decisions, not innovation velocity. Each additional approval adds its own queue to the time to start, yet provides little incremental protection for experiments that operate on internal data with limited business exposure.

Building Governance That Enables Speed

Create a tiered governance framework. Not all AI experiments carry the same risk. An internal analytics tool operating on aggregate sales data requires different governance than a customer-facing credit decision model. Build distinct approval pathways with proportionate requirements for each risk tier. Low-risk internal experiments should have a five-day approval pathway, not a fourteen-week one.
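A tiered framework can be expressed as a small routing rule that assigns each proposal a tier and an approval pathway. The sketch below is illustrative only: the tier criteria (`customer_facing`, `regulated_domain`, `uses_personal_data`), the review lists, and the ten-day medium-tier target are hypothetical assumptions. Only the five-day low-risk pathway comes from the recommendation above.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class ExperimentProposal:
    # Hypothetical risk attributes; a real framework would define its own.
    name: str
    customer_facing: bool     # does any output reach customers?
    regulated_domain: bool    # e.g. credit decisions
    uses_personal_data: bool  # individual-level rather than aggregate data


@dataclass(frozen=True)
class ApprovalPathway:
    tier: RiskTier
    reviews: tuple[str, ...]
    target_days: int | None  # None: full production governance, no fast-track target


# Hypothetical pathways; only the five-day LOW target is taken from the article.
PATHWAYS = {
    RiskTier.LOW: ApprovalPathway(RiskTier.LOW, ("business sponsor",), 5),
    RiskTier.MEDIUM: ApprovalPathway(
        RiskTier.MEDIUM, ("business sponsor", "data governance"), 10
    ),
    RiskTier.HIGH: ApprovalPathway(
        RiskTier.HIGH,
        ("risk", "legal", "IT architecture", "data governance", "AI ethics"),
        None,
    ),
}


def classify(p: ExperimentProposal) -> RiskTier:
    """Return the most restrictive tier that any attribute triggers."""
    if p.customer_facing or p.regulated_domain:
        return RiskTier.HIGH
    if p.uses_personal_data:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def route(p: ExperimentProposal) -> ApprovalPathway:
    return PATHWAYS[classify(p)]


if __name__ == "__main__":
    sales = ExperimentProposal("sales-trend-analysis", False, False, False)
    credit = ExperimentProposal("credit-decision-model", True, True, True)
    for proposal in (sales, credit):
        pathway = route(proposal)
        print(proposal.name, pathway.tier.value, pathway.target_days)
```

Checking the high-risk conditions first means a proposal is never fast-tracked because one low-risk attribute happened to be evaluated before a high-risk one.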

Establish an AI experimentation sandbox. A sandboxed environment with pre-approved data access, pre-configured tooling, and a simplified approval process enables rapid experimentation while maintaining the data and security controls that matter.
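One way to make "pre-approved data access" and "pre-configured tooling" concrete is a policy object that every sandbox request passes through. This is a minimal sketch under stated assumptions: the `SandboxPolicy` class, the dataset and tool names, and the rule that external egress is blocked by default are hypothetical, not a description of any particular platform.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SandboxPolicy:
    # Hypothetical catalog of datasets pre-approved for sandbox use.
    approved_datasets: frozenset[str]
    # Hypothetical tooling that is pre-configured and needs no further review.
    approved_tools: frozenset[str]
    # Sandboxed work stays inside the environment unless explicitly allowed.
    allow_external_egress: bool = False

    def check(self, dataset: str, tool: str, egress: bool = False) -> list[str]:
        """Return the reasons a request falls outside the sandbox (empty if allowed)."""
        problems = []
        if dataset not in self.approved_datasets:
            problems.append(f"dataset '{dataset}' is not pre-approved")
        if tool not in self.approved_tools:
            problems.append(f"tool '{tool}' is not pre-configured")
        if egress and not self.allow_external_egress:
            problems.append("external data egress is blocked in the sandbox")
        return problems


if __name__ == "__main__":
    policy = SandboxPolicy(
        approved_datasets=frozenset({"sales_aggregates", "inventory_daily"}),
        approved_tools=frozenset({"notebook", "batch_training"}),
    )
    print(policy.check("sales_aggregates", "notebook"))  # []
    print(policy.check("customer_pii", "notebook"))  # ["dataset 'customer_pii' is not pre-approved"]
```

In this sketch, requests inside the policy proceed without a ticket; only a request that returns a non-empty list would need to go through an approval pathway.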

Define experimentation guardrails instead of experiment-level approvals. Set clear rules for what qualifies as an experiment: internal data only, no automated customer-facing outputs, and defined rollback criteria. Within those guardrails, empower teams to self-approve. Review the guardrails quarterly rather than reviewing every experiment individually.
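The three example guardrails translate naturally into a self-approval check: a plan that passes every rule is approved by the team itself, and only a plan that fails one goes to review. The `ExperimentPlan` fields, the function names, and the internal-source catalog below are hypothetical; the rules themselves mirror the ones listed above.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ExperimentPlan:
    # Hypothetical fields a team fills in before starting an experiment.
    name: str
    data_sources: list[str]
    automated_customer_output: bool  # would anything reach customers without human review?
    rollback_criteria: list[str] = field(default_factory=list)


def guardrail_violations(plan: ExperimentPlan, internal_sources: set[str]) -> list[str]:
    """Check a plan against the three guardrails; an empty list means no violations."""
    violations = []
    external = [s for s in plan.data_sources if s not in internal_sources]
    if external:
        violations.append(f"non-internal data: {', '.join(external)}")
    if plan.automated_customer_output:
        violations.append("automated customer-facing output")
    if not plan.rollback_criteria:
        violations.append("no rollback criteria defined")
    return violations


def can_self_approve(plan: ExperimentPlan, internal_sources: set[str]) -> bool:
    return not guardrail_violations(plan, internal_sources)


if __name__ == "__main__":
    # Hypothetical catalog of sources classified as internal.
    internal = {"sales_aggregates", "support_tickets"}
    plan = ExperimentPlan(
        name="ticket-triage-prototype",
        data_sources=["support_tickets"],
        automated_customer_output=False,
        rollback_criteria=["stop if triage accuracy falls below the manual baseline"],
    )
    print(can_self_approve(plan, internal))  # True
```

Because the check returns the specific violations rather than a bare yes or no, a team whose plan fails knows exactly which guardrail triggered escalation.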

Measure the cost of inaction. Require AI risk committees to estimate the revenue impact and competitive disadvantage of blocking a proposed experiment, alongside the risk of approving it. Making inaction visible changes the risk calculus.
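A risk committee could put both sides of the decision in the same units with a simple expected-value comparison. The sketch below is one possible formulation, not a standard method: it assumes value forgone accrues linearly, so each week of delay costs annual value times probability of success divided by 52, and it treats the risk of approving as incident probability times impact. It does not attempt to quantify competitive disadvantage. All figures in the example are made-up illustrative inputs.

```python
from dataclasses import dataclass

WEEKS_PER_YEAR = 52


@dataclass(frozen=True)
class ExperimentEstimate:
    annual_value_if_successful: float  # estimated yearly benefit if the experiment works
    p_success: float                   # probability the experiment succeeds
    p_incident: float                  # probability that approving leads to a harmful incident
    incident_impact: float             # estimated cost of that incident


def cost_of_delay(e: ExperimentEstimate, delay_weeks: float) -> float:
    """Expected value forgone while the proposal waits (linear accrual assumed)."""
    return e.annual_value_if_successful * e.p_success * delay_weeks / WEEKS_PER_YEAR


def expected_risk_cost(e: ExperimentEstimate) -> float:
    """Expected cost of approving: incident probability times impact."""
    return e.p_incident * e.incident_impact


if __name__ == "__main__":
    # Illustrative numbers only.
    est = ExperimentEstimate(
        annual_value_if_successful=520_000,
        p_success=0.25,
        p_incident=0.02,
        incident_impact=200_000,
    )
    print(f"cost of a 14-week delay: {cost_of_delay(est, 14):,.0f}")  # 35,000
    print(f"expected risk cost:      {expected_risk_cost(est):,.0f}")  # 4,000
```

With these particular inputs the delay costs more than the risk; with other inputs it will not. The value of the exercise is that both numbers appear on the same page.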

The ITSoli Experimentation Architecture

ITSoli helps clients design AI governance frameworks that distinguish between experimentation governance and production governance — and apply the right level of rigor to each.

Clients who engage us for governance redesign typically reduce time-to-experiment-start from twelve weeks to under two weeks for low-to-medium risk use cases, without reducing controls on production deployments or regulated applications.

The question your risk committee should be asking is not "what could go wrong if we approve this experiment?" It is "what is the cost to the organization if we do not?"

Governance that prevents experimentation is not governance. It is organizational self-sabotage with a compliance label attached. Build governance that enables speed where speed is appropriate and applies rigor where rigor is required. That distinction is worth more than fourteen-week approval queues.

© 2026 ITSoli